Normalizing flows vs. histogram specification

- Where the name "normalizing flows" comes from

The base density $P(z)$ is usually defined as a multivariate standard normal (i.e., with mean zero and identity covariance). Hence, the effect of each subsequent inverse layer is to gradually move or “flow” the data density toward this normal distribution (figure 16.4). This gives rise to the name “normalizing flows.”

Hence, the name is best translated into Chinese as 正态化流 (“flow toward the normal distribution”).
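For reference, the layered structure described in the quotation can be written with the standard change-of-variables formula. The intermediate variables $h_k$ and the layer count $K$ are notation introduced here for illustration; they do not come from the quoted text:

$$
\begin{aligned}
h_0 &= z, \qquad h_k = f_k(h_{k-1}) \ \ (k = 1,\dots,K), \qquad x = h_K,\\
z &= f_1^{-1}\bigl(f_2^{-1}(\cdots f_K^{-1}(x))\bigr),\\
\log P(x) &= \log P(z) - \sum_{k=1}^{K} \log\left|\det \frac{\partial f_k(h_{k-1})}{\partial h_{k-1}}\right|, \qquad P(z) = \mathcal{N}(z;\, \mathbf{0}, \mathbf{I}).
\end{aligned}
$$

The second line applies the inverse layers one at a time, $f_K^{-1}$ first and $f_1^{-1}$ last. Each one moves the density a step further toward $\mathcal{N}(\mathbf{0}, \mathbf{I})$.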

- Description

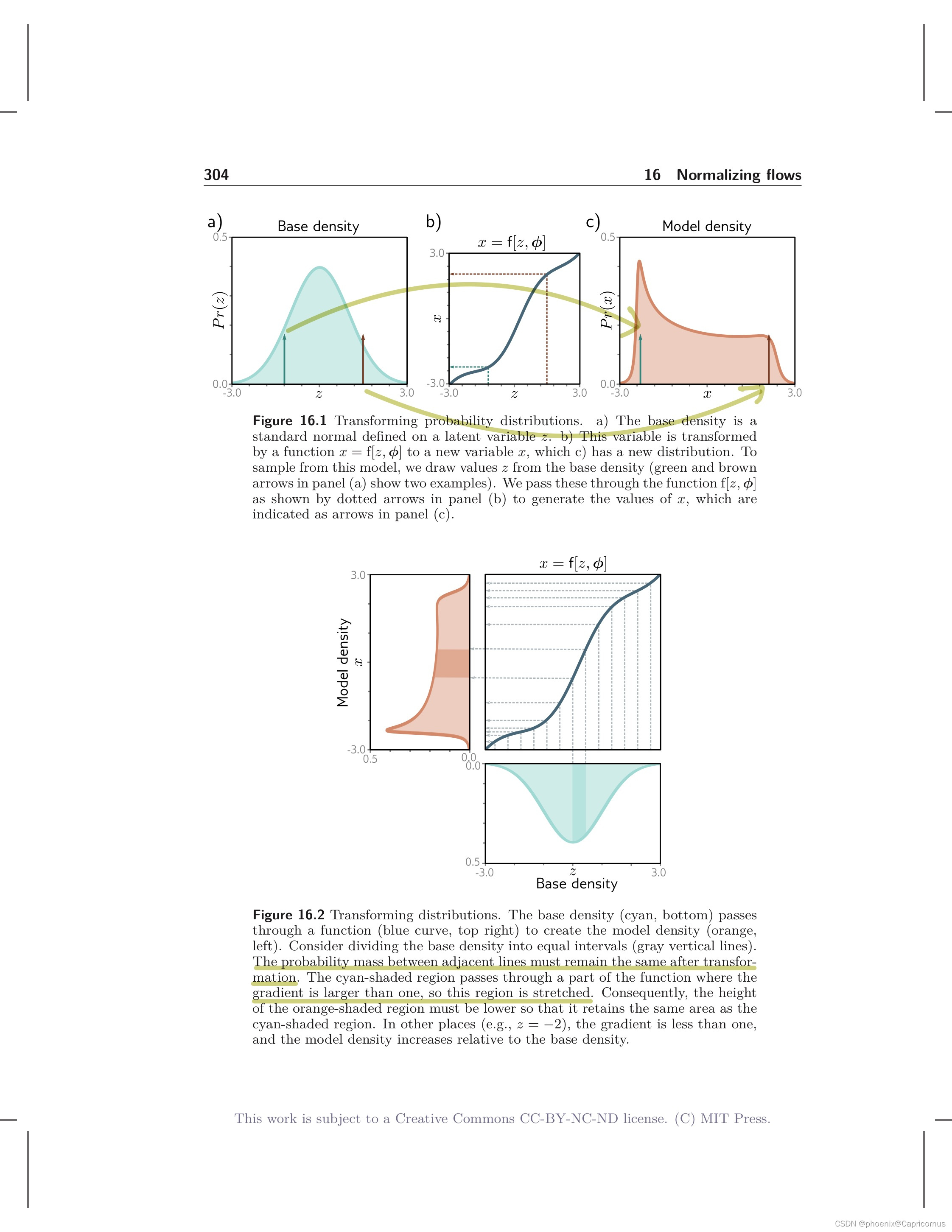

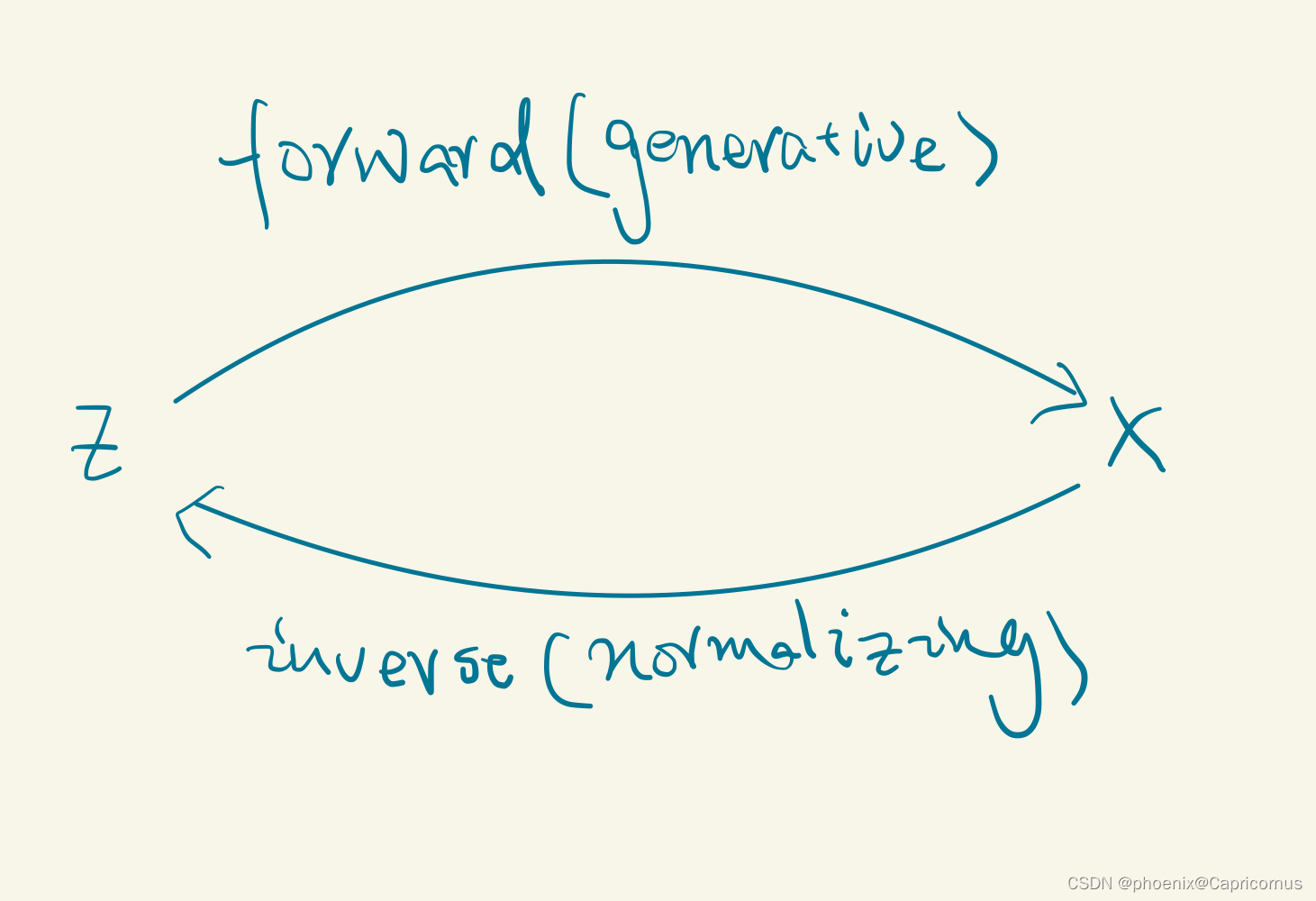

The forward mapping is sometimes termed the generative direction. The base density is usually chosen to be a standard normal distribution. Hence, the inverse mapping is termed the normalizing direction since this takes the complex distribution over $x$ and turns it into a normal distribution over $z$.
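A minimal sketch of the two directions follows. It assumes a hypothetical one-dimensional flow $x = f(z) = \exp(Az + B)$ with illustrative constants `A = 0.8` and `B = 1.0`, neither of which comes from the text. The generative direction turns standard-normal samples into a skewed, log-normal distribution over $x$, and the normalizing direction undoes this exactly:

```python
import numpy as np

# Hypothetical 1-D flow x = f(z) = exp(A*z + B); A > 0 makes f strictly increasing, hence invertible.
A, B = 0.8, 1.0

def generative(z: np.ndarray) -> np.ndarray:
    """Forward / generative direction: base sample z -> data x."""
    return np.exp(A * z + B)

def normalizing(x: np.ndarray) -> np.ndarray:
    """Inverse / normalizing direction: data x (x > 0) -> latent z."""
    return (np.log(x) - B) / A

def skewness(v: np.ndarray) -> float:
    """Sample skewness; roughly 0 for a normal distribution."""
    c = v - v.mean()
    return float((c**3).mean() / (c**2).mean() ** 1.5)

rng = np.random.default_rng(0)
z = rng.standard_normal(100_000)   # samples from the base density N(0, 1)
x = generative(z)                  # skewed "complex" distribution over x
z_back = normalizing(x)            # mapped back onto the standard normal

print(f"skew(x) = {skewness(x):.2f}, skew(z_back) = {skewness(z_back):.2f}")
```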

- Essence

Among the generative models compared here (GANs, VAEs, normalizing flows, and diffusion models), normalizing flows are the only one that can compute the exact log-likelihood of a new sample. Generative adversarial networks are not probabilistic, and both variational autoencoders and diffusion models can only return a lower bound on the likelihood.

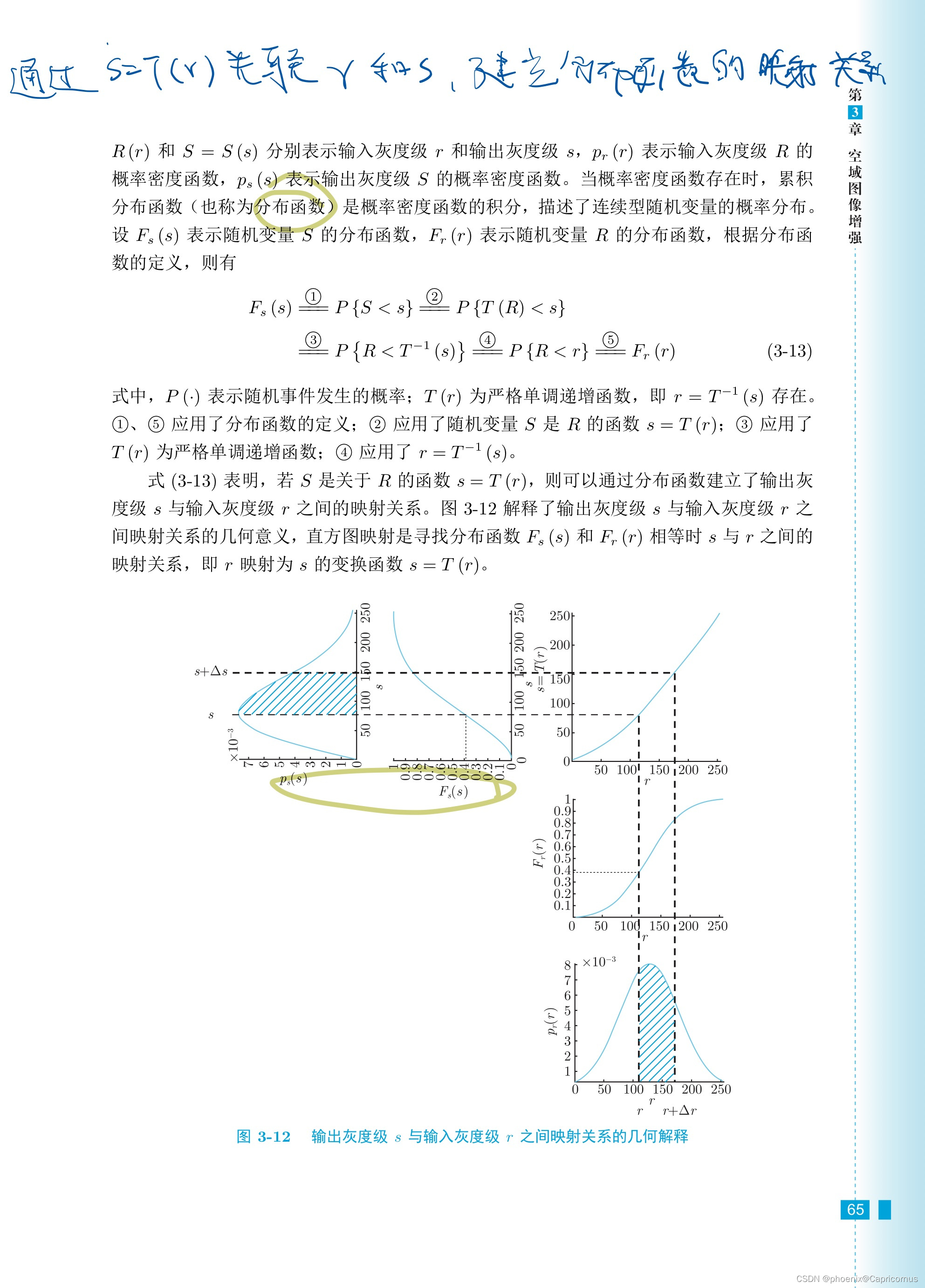
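The following sketch shows what "exact" means in practice. It evaluates $\log P(x)$ for a hypothetical affine flow $x = Wz + b$, where the matrix `W`, the offset `b`, and the dimension `D = 3` are illustrative assumptions. Under this flow $x$ is Gaussian with covariance $WW^\top$, so the change-of-variables result can be checked against the closed-form Gaussian density:

```python
import numpy as np

# Hypothetical affine flow x = f(z) = W @ z + b with base density N(0, I).
rng = np.random.default_rng(1)
D = 3
W = rng.standard_normal((D, D)) + 3.0 * np.eye(D)  # shifted toward identity so it is invertible in practice
b = rng.standard_normal(D)

def flow_log_likelihood(x: np.ndarray) -> float:
    """Exact log P(x) by change of variables: log N(z; 0, I) - log|det W|."""
    z = np.linalg.solve(W, x - b)                  # normalizing direction z = f^{-1}(x)
    log_base = -0.5 * z @ z - 0.5 * D * np.log(2 * np.pi)
    _, logabsdet = np.linalg.slogdet(W)            # log|det(df/dz)|, constant for an affine map
    return float(log_base - logabsdet)

def gaussian_log_pdf(x: np.ndarray, mean: np.ndarray, cov: np.ndarray) -> float:
    """Closed-form log density of N(mean, cov), used only as a cross-check."""
    diff = x - mean
    _, logdet = np.linalg.slogdet(2 * np.pi * cov)
    return float(-0.5 * diff @ np.linalg.solve(cov, diff) - 0.5 * logdet)

x_new = rng.standard_normal(D)                     # an arbitrary "new sample"
print(flow_log_likelihood(x_new), gaussian_log_pdf(x_new, b, W @ W.T))
```

For a real flow no closed form is available to compare against. The change-of-variables computation still gives the exact value, whereas a VAE would only report a bound.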

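A normalizing flow maps one distribution onto another, which is essentially the same idea as histogram specification: an image histogram is simply the probability distribution of its gray levels.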
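The classic histogram specification algorithm makes the analogy concrete. Each gray level $s$ is passed through the source CDF and then through the generalized inverse of the reference CDF. In one dimension this monotone map is a distribution-to-distribution transport of the kind a flow learns, except that here it has a closed form. With discrete gray levels several input levels can merge, so the map is only approximately invertible. Below is a minimal NumPy sketch that assumes 8-bit grayscale arrays; the name `histogram_specification` and the synthetic test images are illustrative:

```python
import numpy as np

def histogram_specification(src: np.ndarray, ref: np.ndarray, levels: int = 256) -> np.ndarray:
    """Remap the gray levels of `src` so its histogram approximates that of `ref`.

    CDF matching: s -> u = CDF_src(s), then pick the smallest level t with CDF_ref(t) >= u.
    """
    src_cdf = np.cumsum(np.bincount(src.ravel(), minlength=levels)) / src.size
    ref_cdf = np.cumsum(np.bincount(ref.ravel(), minlength=levels)) / ref.size
    # side="left" returns, for each u, the first index t with ref_cdf[t] >= u.
    lut = np.searchsorted(ref_cdf, src_cdf, side="left")
    lut = np.clip(lut, 0, levels - 1).astype(src.dtype)  # guard against float round-off at the top end
    return lut[src]                                      # apply the lookup table pixel-wise

rng = np.random.default_rng(0)
dark = rng.integers(0, 64, size=(128, 128), dtype=np.uint8)   # source: uniform over dark levels
bell = np.clip(np.round(rng.normal(128, 30, size=(128, 128))), 0, 255).astype(np.uint8)  # bell-shaped reference
out = histogram_specification(dark, bell)
print(dark.mean(), bell.mean(), out.mean())
```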

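The approach is to construct an invertible deep neural network, learn its parameters, and then use the forward mapping to approximate a probability distribution or to generate images.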
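The notes do not say which invertible architecture to use. One common choice, swapped in here purely as an illustration, is the affine coupling layer popularized by RealNVP. The NumPy sketch below uses random, untrained weights and hypothetical names (`AffineCoupling`, `hidden`). It only demonstrates that the layer is exactly invertible and that its log-determinant is cheap to compute:

```python
import numpy as np

class AffineCoupling:
    """Affine coupling layer (RealNVP-style) with random, untrained weights.

    The first half of the input passes through unchanged; the second half is scaled
    and shifted by amounts computed from the first half, so the inverse is closed-form
    however complex the small network producing s and t is.
    """

    def __init__(self, dim: int, hidden: int, rng: np.random.Generator):
        self.d = dim // 2
        self.W1 = 0.5 * rng.standard_normal((self.d, hidden))
        self.W2 = 0.5 * rng.standard_normal((hidden, 2 * (dim - self.d)))

    def _scale_shift(self, h1: np.ndarray):
        hidden = np.tanh(h1 @ self.W1)                  # tiny MLP on the untouched half
        s, t = np.split(hidden @ self.W2, 2, axis=-1)
        return np.tanh(s), t                            # bounded log-scale for numerical stability

    def forward(self, z: np.ndarray):
        """Generative direction z -> x; also returns log|det dx/dz| per sample."""
        z1, z2 = z[..., :self.d], z[..., self.d:]
        s, t = self._scale_shift(z1)
        x = np.concatenate([z1, z2 * np.exp(s) + t], axis=-1)
        return x, s.sum(axis=-1)                        # triangular Jacobian: det = prod(exp(s))

    def inverse(self, x: np.ndarray) -> np.ndarray:
        """Normalizing direction x -> z, exact and non-iterative."""
        x1, x2 = x[..., :self.d], x[..., self.d:]
        s, t = self._scale_shift(x1)                    # x1 == z1, so s and t are recovered exactly
        return np.concatenate([x1, (x2 - t) * np.exp(-s)], axis=-1)

def numerical_logabsdet(f, v: np.ndarray, eps: float = 1e-6) -> float:
    """log|det J| of f at v from a central-difference Jacobian (for checking only)."""
    cols = [(f(v + eps * e) - f(v - eps * e)) / (2 * eps) for e in np.eye(v.size)]
    return float(np.linalg.slogdet(np.stack(cols, axis=1))[1])

rng = np.random.default_rng(0)
layer = AffineCoupling(dim=4, hidden=8, rng=rng)
z = rng.standard_normal((5, 4))
x, logdet = layer.forward(z)
z_rec = layer.inverse(x)
print(np.abs(z_rec - z).max(), logdet[0], numerical_logabsdet(lambda v: layer.forward(v)[0], z[0]))
```

A practical flow stacks several such layers, alternating which half is left unchanged. It then trains the weights by minimizing $-\log P(x)$ with an autodiff framework; that training loop is omitted here.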

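The inverse process resembles the forward (noise-adding) process of a diffusion model: both map a complex distribution onto a simple multivariate standard normal distribution, reaching the same destination by different routes.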
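For comparison, the forward noising process of a DDPM-style diffusion model can be sampled in closed form as $x_t = \sqrt{\bar\alpha_t}\,x_0 + \sqrt{1-\bar\alpha_t}\,\epsilon$. The sketch below assumes a linear $\beta$ schedule from $10^{-4}$ to $0.02$ over $T = 1000$ steps, together with a hypothetical bimodal 1-D dataset, and shows the data drifting toward $\mathcal{N}(0, 1)$. One difference is worth keeping in mind: this noising is fixed and stochastic, whereas a flow's normalizing direction is a learned, deterministic, invertible map.

```python
import numpy as np

# Assumed DDPM-style linear schedule; these values are not taken from the notes above.
T = 1000
betas = np.linspace(1e-4, 0.02, T)
alpha_bar = np.cumprod(1.0 - betas)   # alpha_bar[t] = prod over s <= t of (1 - beta_s)

def q_sample(x0: np.ndarray, t: int, rng: np.random.Generator) -> np.ndarray:
    """Draw x_t ~ q(x_t | x_0) = N(sqrt(alpha_bar_t) * x0, (1 - alpha_bar_t) * I) in one step."""
    noise = rng.standard_normal(x0.shape)
    return np.sqrt(alpha_bar[t]) * x0 + np.sqrt(1.0 - alpha_bar[t]) * noise

rng = np.random.default_rng(0)
# Hypothetical "complex" data: a two-mode mixture centred at -3 and +3.
x0 = np.where(rng.random(100_000) < 0.5, -3.0, 3.0) + 0.3 * rng.standard_normal(100_000)
for t in (0, 100, 500, T - 1):
    xt = q_sample(x0, t, rng)
    print(f"t={t:4d}  mean={xt.mean():+.3f}  std={xt.std():.3f}")
```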